Ethical Risks of AI-Generated Figures and Visual Data in Academic Publishing: Accuracy, Misrepresentation, and Editorial Responsibility

Introduction

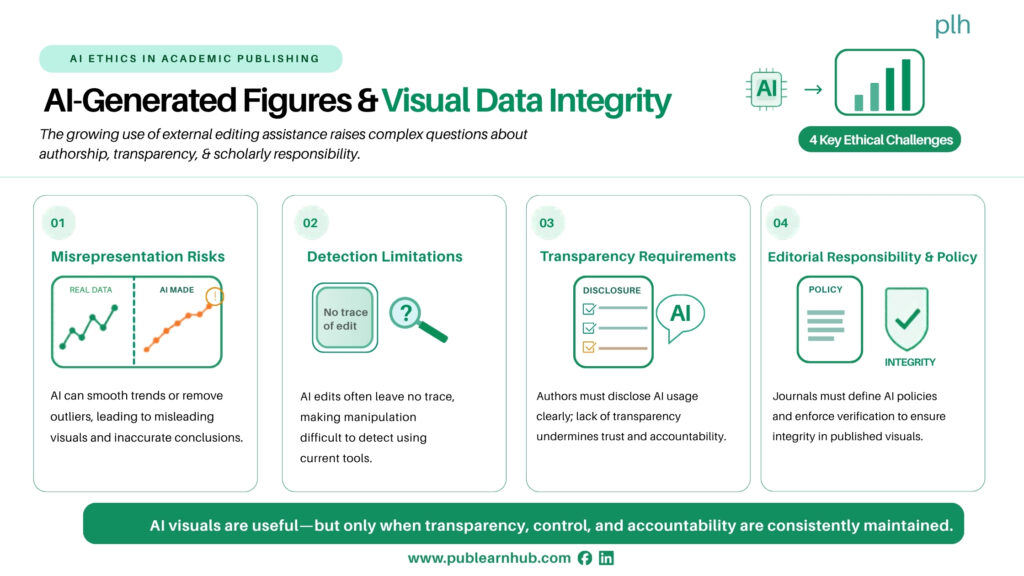

In the evolving landscape of academic publishing, artificial intelligence is transforming not only how research is written but also how it is visualized. From generating graphs and diagrams to enhancing images and creating entirely synthetic figures, AI-powered tools are increasingly being used to produce visual elements in research papers. While these technologies offer efficiency and aesthetic improvements, they also introduce complex ethical challenges that demand urgent attention.

Figures and visual data are central to scientific communication. They simplify complex findings, highlight trends, and often serve as the most accessible representation of research outcomes. However, when AI is involved in generating or modifying these visuals, questions arise about accuracy, authenticity, and the potential for subtle misrepresentation.

The Growing Role of AI in Visual Research Content

AI tools can now automatically generate charts from datasets, enhance low-quality images, and even simulate experimental results for illustrative purposes. Researchers may use these tools to save time, improve clarity, or make their work more visually appealing. In some cases, AI-generated visuals can help communicate findings more effectively than traditional methods.

However, the line between enhancement and fabrication can quickly blur. Unlike manual figure creation, in which the researcher directly controls each step, AI-assisted workflows may introduce changes that are not fully transparent or easily traceable. This creates a risk that visuals may no longer accurately reflect the underlying data.

Risks of Misrepresentation

One of the most significant concerns is the potential for unintentional misrepresentation. AI-generated figures may smooth data trends, remove outliers, or enhance patterns in ways that make results appear more conclusive than they actually are. Even minor visual adjustments can influence how readers interpret findings.
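
The effect of smoothing on an outlier can be seen in a few lines of Python. This is a minimal sketch assuming a simple trailing moving average; it is not the algorithm of any particular AI charting tool, and the series of readings is invented for illustration.

```python
def moving_average(values: list[float], window: int = 3) -> list[float]:
    """Trailing moving average: each point is the mean of itself and up to window-1 predecessors."""
    if window < 1:
        raise ValueError("window must be at least 1")
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


# Hypothetical readings with a single anomalous spike at index 4.
raw = [10.0, 10.5, 9.8, 10.2, 25.0, 10.1, 9.9, 10.3]
smoothed = moving_average(raw, window=3)

print(max(raw))                         # the spike as it appears in the raw data
print([round(v, 2) for v in smoothed])  # the spike is diluted and spread over neighbours
```

In the smoothed series, the single reading of 25.0 becomes a plateau of roughly 15 across three consecutive points. Whether that is acceptable depends on the analysis, which is why smoothing should be a deliberate, reported choice rather than a silent default.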

For example, an AI-generated graph might automatically rescale an axis, such as starting a bar chart's y-axis well above zero, or apply other visual optimizations that exaggerate differences between groups. Similarly, image enhancement tools used in fields such as biology or medical research may alter contrast or resolution in ways that obscure important details or create misleading impressions.
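
To make the axis-scale risk concrete, the Python sketch below computes how much taller one bar looks than another when a bar chart's y-axis starts at a non-zero baseline. The function names, the group means, and the baseline of 49 are hypothetical, chosen only for illustration; the second function follows the idea of Edward Tufte's "lie factor" (the size of an effect shown in a graphic divided by its size in the data).

```python
def apparent_ratio(a: float, b: float, axis_min: float = 0.0) -> float:
    """Ratio of the drawn height of bar b to bar a when the y-axis starts at axis_min."""
    if a <= axis_min or b <= axis_min:
        raise ValueError("both values must lie above the axis baseline")
    return (b - axis_min) / (a - axis_min)


def lie_factor(a: float, b: float, axis_min: float) -> float:
    """Effect shown in the graphic divided by the effect present in the data (after Tufte)."""
    if a == b:
        raise ValueError("no effect in the data, so the ratio is undefined")
    data_effect = (b - a) / a                            # relative difference in the data
    shown_effect = apparent_ratio(a, b, axis_min) - 1.0  # relative difference in bar heights
    return shown_effect / data_effect


# Hypothetical group means: a 4% difference between control and treatment.
control, treatment = 50.0, 52.0

print(apparent_ratio(control, treatment))                 # zero baseline: about 1.04
print(apparent_ratio(control, treatment, axis_min=49.0))  # truncated axis: 3.0
print(lie_factor(control, treatment, axis_min=49.0))      # about 50
```

Here a four percent difference in the data is drawn as a threefold difference in bar height. Checking the axis baseline of every bar chart, whether it was produced by hand or by an AI tool, is a cheap safeguard.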

In more serious cases, AI tools could be used to generate entirely synthetic images or datasets and present them as genuine experimental results. Such practices clearly cross ethical boundaries, yet they may be difficult to detect, especially as AI-generated content becomes more sophisticated.

The Challenge of Detection

Detecting issues in AI-generated visuals is significantly more complex than identifying textual problems. Traditional plagiarism detection tools are built to compare text and offer little help with images, and even experienced reviewers may struggle to identify subtle manipulations.

AI-generated figures often lack clear markers of alteration. Unlike manually edited images, which may leave traces of manipulation, AI outputs can appear seamless and realistic. This makes it harder for editors and reviewers to verify whether a visual accurately represents the original data.

As a result, journals face a growing challenge: ensuring the integrity of visual content in an environment where manipulation is becoming easier to perform and harder to detect.

Transparency and Disclosure

One of the most important steps toward addressing these risks is establishing clear expectations for transparency. Authors should be required to disclose the use of AI tools in generating or modifying figures, just as they are increasingly required to disclose AI assistance in writing.

Disclosure should include details about the type of tool used, the extent of its involvement, and whether the output was manually verified. This allows editors and reviewers to better assess the reliability of the visuals and ensures that readers are aware of how the figures were produced.
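
One way a journal could make such disclosures consistent is to collect them as structured records rather than free text. The Python sketch below is purely hypothetical: the field names, the `ALLOWED_EXTENTS` vocabulary, and the checks in `disclosure_problems` are illustrative assumptions, not an existing journal standard.

```python
from dataclasses import dataclass

# Hypothetical vocabulary for how far an AI tool was involved in a figure.
ALLOWED_EXTENTS = {"none", "layout_only", "enhancement", "generation"}


@dataclass
class FigureAIDisclosure:
    figure_id: str
    tool: str                # name and version of the AI tool, as reported by the author
    extent: str              # expected to be one of ALLOWED_EXTENTS
    manually_verified: bool  # did the author check the output against the underlying data?
    notes: str = ""


def disclosure_problems(d: FigureAIDisclosure) -> list[str]:
    """Return a list of human-readable issues; an empty list means the record looks complete."""
    problems = []
    if d.extent not in ALLOWED_EXTENTS:
        problems.append(f"{d.figure_id}: unknown extent {d.extent!r}")
    if d.extent != "none" and not d.tool.strip():
        problems.append(f"{d.figure_id}: AI use declared but no tool named")
    if d.extent in {"enhancement", "generation"} and not d.manually_verified:
        problems.append(f"{d.figure_id}: AI output not manually verified")
    return problems


fig2 = FigureAIDisclosure("Figure 2", tool="", extent="enhancement", manually_verified=False)
for problem in disclosure_problems(fig2):
    print(problem)
```

A fixed vocabulary makes underreporting easier to spot than free-text statements do, although it only checks that a disclosure is complete, not that it is truthful.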

However, disclosure alone is not enough. Without clear standards, authors may underreport or inconsistently describe their use of AI tools. Journals must therefore provide specific guidelines on what constitutes acceptable use and what requires explicit acknowledgment.

Editorial Responsibility and Policy Development

Editors play a crucial role in maintaining the integrity of visual data. As AI-generated figures become more common, journals must develop policies that define acceptable practices and establish review protocols for visual content.

These policies should address key questions, such as:

- What level of AI assistance is permissible in figure creation?

- How should AI-generated visuals be labeled or documented?

- What verification steps are required before publication?

In addition, journals may need to invest in new tools and training for reviewers. This could include image forensics software, AI-detection systems, or specialized reviewer guidelines focused on visual integrity.
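
Real image-forensics software is far more sophisticated, but the simplest verification step, comparing a processed figure against the original image supplied by the author, can be sketched in Python. This assumes both images are available as equally sized grayscale grids of values from 0 to 255; the function name and the tolerance of 10 grey levels are hypothetical choices. Note that such a comparison is only possible when an original exists, so it cannot reveal a fully synthetic image.

```python
def changed_fraction(
    original: list[list[int]], processed: list[list[int]], tolerance: int = 10
) -> float:
    """Fraction of pixels whose grayscale value differs by more than `tolerance`."""
    if len(original) != len(processed) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(original, processed)
    ):
        raise ValueError("images must have identical dimensions")
    total = changed = 0
    for row_a, row_b in zip(original, processed):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += 1
            if abs(pixel_a - pixel_b) > tolerance:
                changed += 1
    return changed / total if total else 0.0


# Hypothetical 2x3 images: one pixel altered heavily, one only slightly.
original = [[100, 100, 100], [50, 50, 50]]
processed = [[100, 200, 100], [50, 55, 50]]

print(changed_fraction(original, processed))  # 1 of 6 pixels exceeds the tolerance
```

A high changed fraction does not by itself indicate misconduct, since legitimate contrast adjustments alter many pixels, but it tells an editor where to look and when to ask for the author's processing steps.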

Balancing Innovation with Integrity

It is important to recognize that AI-generated visuals are not inherently problematic. When used responsibly, they can enhance clarity, improve accessibility, and support more effective communication of research findings. The challenge lies in ensuring that these benefits do not come at the cost of accuracy or trust.

Researchers must remain actively involved in the creation and verification of their figures. AI should be treated as a tool—not a substitute for scientific judgment. Every visual element in a paper should be carefully reviewed to ensure it faithfully represents the underlying data.

At the same time, journals must foster a culture of accountability. Clear policies, consistent enforcement, and transparent communication are essential for maintaining confidence in published research.

Looking Ahead

As AI continues to evolve, its role in academic publishing will only expand. Visual content, once considered a straightforward representation of data, is becoming a complex and potentially vulnerable component of the research process.

The future of scholarly communication will depend on how effectively the academic community addresses these challenges. By prioritizing transparency, strengthening editorial oversight, and promoting responsible use of AI, it is possible to harness the advantages of these technologies while safeguarding the integrity of the scientific record.

In a world where visuals often speak louder than words, ensuring their accuracy is not just a technical issue—it is a fundamental responsibility.